[LLM Sponsor Detection Benchmark]

March 25, 2026

Cutio uses LLMs to detect sponsor segments in YouTube videos in real time. Model choice directly affects user experience: a false positive skips actual content, while a false negative forces the user to sit through an ad. We need models that are accurate, fast, cheap, and reliable, but these goals are in tension. This benchmark evaluates 5 models on detecting and classifying sponsored and self-promotional segments across 65 videos spanning diverse categories and creators, and quantifies the trade-offs.

The task is harder than it looks. Sponsors are often woven into content naturally (stealth integration), creators add meta-commentary about their own ads, and self-promo segments can look identical to sponsored reads. Ground truth is built via multi-model consensus from three frontier LLMs with judge arbitration, and the prompt encodes a decision tree that forces models to reason about context before classifying.

> Multi-Model Consensus Ground Truth

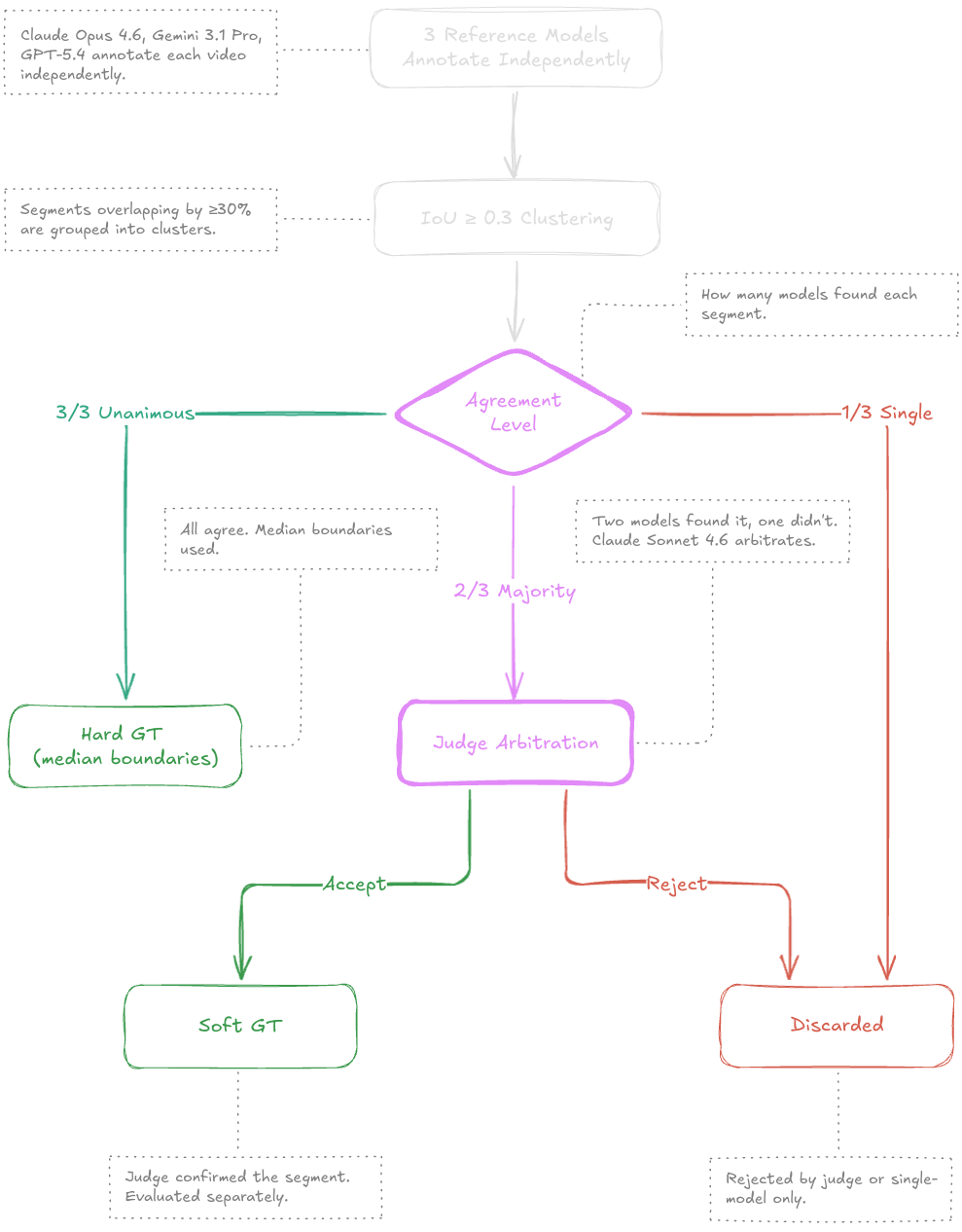

Building reliable ground truth for ad-segment detection is hard — human annotation is expensive, slow, and subjective. We use a multi-model consensus protocol that combines three frontier-class LLMs as independent annotators with a fourth model as a tiebreaker judge.

Reference models: Claude Opus 4.6, Gemini 3.1 Pro, and GPT-5.4 annotate every video independently with extended thinking enabled. Overlapping segments are clustered across models using IoU ≥ 0.3.
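The clustering step can be sketched as follows. This is a minimal illustration, not the benchmark's actual code: the source only states the IoU ≥ 0.3 threshold, so the greedy "join the first overlapping cluster" strategy, the function names, and the sample annotations are all assumptions.

```python
from typing import Dict, List, Tuple

Segment = Tuple[float, float]  # (start_seconds, end_seconds)


def iou(a: Segment, b: Segment) -> float:
    """Temporal intersection-over-union of two segments."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def cluster_segments(
    annotations: Dict[str, List[Segment]], threshold: float = 0.3
) -> List[List[Tuple[str, Segment]]]:
    """Group segments from different models that overlap with IoU >= threshold.

    Assumed strategy: a segment joins the first existing cluster that holds
    a segment from another model it overlaps with; otherwise it starts a
    new cluster.
    """
    clusters: List[List[Tuple[str, Segment]]] = []
    for model, segments in annotations.items():
        for seg in segments:
            for cluster in clusters:
                if any(m != model and iou(seg, s) >= threshold for m, s in cluster):
                    cluster.append((model, seg))
                    break
            else:
                clusters.append([(model, seg)])
    return clusters


# Hypothetical annotations for one video (not benchmark data).
annotations = {
    "model_a": [(10, 40)],
    "model_b": [(12, 42)],
    "model_c": [(100, 130)],
}
clusters = cluster_segments(annotations)
```

Here the first two segments land in one cluster and the third forms its own. A production version would likely need to handle a model contributing two segments to the same cluster, which this sketch does not prevent.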

Tiered confidence: Segments where all three models agree become hard ground truth (median boundaries). Where only a majority agrees, Claude Sonnet 4.6 acts as a blind judge — receiving the transcript fragment and anonymized annotations without knowing which model produced which. The judge's verdict becomes soft ground truth. Single-model detections are discarded.
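A rough sketch of the tiering rule described above, assuming each cluster is a list of `(model, (start, end))` pairs. The tier labels, the function name, and the sample clusters are illustrative; the judge call itself is out of scope here and is represented only by a `needs_judge` result.

```python
from statistics import median
from typing import List, Optional, Tuple

Segment = Tuple[float, float]
Cluster = List[Tuple[str, Segment]]


def tier_cluster(cluster: Cluster, n_models: int = 3) -> Tuple[str, Optional[Segment]]:
    """Assign a confidence tier to one cluster of overlapping annotations."""
    models = {model for model, _ in cluster}
    if len(models) == n_models:
        # Unanimous: hard ground truth with median boundaries.
        start = median(seg[0] for _, seg in cluster)
        end = median(seg[1] for _, seg in cluster)
        return "hard", (start, end)
    if len(models) > n_models / 2:
        # Majority only: hand over to the blind judge (not modeled here).
        return "needs_judge", None
    # Single-model detection: discarded.
    return "discard", None


# Hypothetical clusters (not benchmark data).
unanimous = [("a", (10, 40)), ("b", (12, 42)), ("c", (11, 45))]
majority = [("a", (10, 40)), ("b", (12, 42))]
single = [("c", (100, 130))]
```

With these inputs, the unanimous cluster yields median boundaries of 11 s and 42 s, the two-model cluster is routed to the judge, and the single-model cluster is dropped.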

Inter-annotator agreement measured via Krippendorff's alpha reaches α = 0.856 — well above the 0.8 threshold typically considered reliable. 21 segment clusters were disputed and discarded.

| Total segments | Hard (unanimous) | Soft (judged) | Krippendorff's α |
|---|---|---|---|
| 95 | 87 | 8 | 0.856 |

> Prompt Design

Each model receives the same structured prompt: video metadata (author, title, category, description, duration) plus the full timestamped transcript. The prompt encodes a decision tree that forces sequential reasoning:

- Q1. Is this promotional language sincere? → ironic / skit → skip
- Q2. Does the video exist for this purpose? → primary topic → skip
- Q3. Third-party brand + CTA? → sponsor
- Q4. Creator's own stuff in >5s block? → self-promo
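Read as code, the decision tree is a chain of early exits. The sketch below is only an illustration of that ordering: in the benchmark the reasoning happens inside the LLM prompt, and the `Candidate` fields and the `classify` function are hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    """Hypothetical answers an annotator would give for one passage."""
    sincere: bool            # Q1: promotional language is meant seriously
    is_primary_topic: bool   # Q2: the video exists to cover this product
    third_party_brand: bool  # Q3: a brand other than the creator's own
    has_cta: bool            # Q3: includes a call to action
    own_product: bool        # Q4: the creator's own merch, channel, course
    duration_s: float        # Q4: length of the contiguous block


def classify(c: Candidate) -> Optional[str]:
    """Return 'sponsor', 'self-promo', or None (skip), following Q1 to Q4 in order."""
    if not c.sincere:                          # Q1: ironic or skit
        return None
    if c.is_primary_topic:                     # Q2: the video is about this
        return None
    if c.third_party_brand and c.has_cta:      # Q3
        return "sponsor"
    if c.own_product and c.duration_s > 5:     # Q4
        return "self-promo"
    return None


sponsor_read = Candidate(True, False, True, True, False, 45)
review_video = Candidate(True, True, True, True, False, 600)
merch_plug = Candidate(True, False, False, False, True, 12)
```

The ordering matters: a sincere brand mention with a CTA is still skipped when the whole video is a review of that product, because Q2 is evaluated before Q3.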

The output is structured JSON validated with Zod — segment start/end in integer seconds with category classification. An Anthropic-specific workaround removes minimum constraints from the schema (Claude rejects them), then re-validates locally with the strict schema.
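The relax-then-revalidate pattern can be illustrated in a language-neutral way. The benchmark itself uses Zod, so the Python below is not its implementation: the JSON-Schema-style dictionary, the category names, and both function names are assumptions made for the sketch.

```python
from typing import Any, Dict

# Hypothetical strict schema, written as a JSON-Schema-like dict.
STRICT_SCHEMA: Dict[str, Any] = {
    "type": "object",
    "properties": {
        "start": {"type": "integer", "minimum": 0},
        "end": {"type": "integer", "minimum": 0},
        "category": {"type": "string", "enum": ["sponsor", "self-promo"]},
    },
    "required": ["start", "end", "category"],
}


def strip_minimums(schema: Any) -> Any:
    """Return a copy of the schema with every 'minimum' keyword removed."""
    if isinstance(schema, dict):
        return {k: strip_minimums(v) for k, v in schema.items() if k != "minimum"}
    if isinstance(schema, list):
        return [strip_minimums(v) for v in schema]
    return schema


def validate_strict(segment: Dict[str, Any]) -> None:
    """Re-check a returned segment locally against the strict constraints."""
    props = STRICT_SCHEMA["properties"]
    for key in ("start", "end"):
        value = segment[key]
        if not isinstance(value, int) or isinstance(value, bool):
            raise ValueError(f"{key} must be an integer number of seconds")
        if value < props[key]["minimum"]:
            raise ValueError(f"{key} is below the minimum")
    if segment["category"] not in props["category"]["enum"]:
        raise ValueError("unknown category")


# Schema sent to the provider that rejects 'minimum'; the strict one stays local.
relaxed = strip_minimums(STRICT_SCHEMA)
```

The relaxed schema goes to the API, while every response is still checked with `validate_strict`, so dropping the constraint on the wire does not loosen what the pipeline accepts. Note that this naive stripper would also remove a property literally named `minimum`.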

> Evaluation Protocol

Matching: Predicted segments are matched to ground truth using greedy IoU-based assignment. All candidate pairs with IoU ≥ 0.5 are sorted by IoU descending; the best pair is matched first, then both segments are removed from further consideration. A match also requires correct category classification.
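A minimal sketch of the greedy matching described above. One detail is an assumption: the source says a match requires the correct category but not at which stage this is checked, so here category mismatches are filtered out before candidate pairs are sorted. Function names and the sample segments are illustrative.

```python
from typing import List, Tuple

Labeled = Tuple[float, float, str]  # (start_seconds, end_seconds, category)


def iou(a: Labeled, b: Labeled) -> float:
    """Temporal intersection-over-union, ignoring the category field."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def greedy_match(
    preds: List[Labeled], gts: List[Labeled], threshold: float = 0.5
) -> List[Tuple[int, int, float]]:
    """Return (pred_index, gt_index, iou) for each matched pair."""
    candidates = []
    for i, p in enumerate(preds):
        for j, g in enumerate(gts):
            score = iou(p, g)
            # Assumption: category must agree for a pair to be a candidate.
            if score >= threshold and p[2] == g[2]:
                candidates.append((score, i, j))
    candidates.sort(key=lambda c: c[0], reverse=True)  # best IoU first

    used_preds, used_gts, matches = set(), set(), []
    for score, i, j in candidates:
        if i in used_preds or j in used_gts:
            continue  # one of the two segments is already matched
        used_preds.add(i)
        used_gts.add(j)
        matches.append((i, j, score))
    return matches


# Hypothetical example (not benchmark data).
preds = [(10, 40, "sponsor"), (100, 120, "self-promo")]
gts = [(12, 42, "sponsor"), (100, 130, "sponsor")]
matches = greedy_match(preds, gts)
tp = len(matches)
fp = len(preds) - tp
fn = len(gts) - tp
```

In this example the first prediction matches with IoU 0.875. The second overlaps its ground-truth segment well (IoU about 0.67) but carries the wrong category, so it counts as one false positive and leaves one false negative.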

Metrics: F1 is micro-averaged across all videos. Timing accuracy is measured by MAE (Mean Absolute Error) of start and end boundaries for matched pairs. Mean IoU captures temporal overlap quality. Bootstrap confidence intervals (1000 iterations, block-resampled by video) provide uncertainty estimates.
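The micro-averaged F1 and the video-level bootstrap can be sketched as below, assuming per-video `(tp, fp, fn)` counts are already available from matching. The percentile method, the fixed seed, and the sample counts are assumptions; the source specifies only 1000 iterations and resampling by video.

```python
import random
from typing import List, Tuple

Counts = Tuple[int, int, int]  # (true positives, false positives, false negatives)


def micro_f1(per_video: List[Counts]) -> float:
    """Pool counts across videos, then compute a single F1."""
    tp = sum(c[0] for c in per_video)
    fp = sum(c[1] for c in per_video)
    fn = sum(c[2] for c in per_video)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def bootstrap_ci(
    per_video: List[Counts],
    iterations: int = 1000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap CI, resampling whole videos with replacement."""
    rng = random.Random(seed)
    n = len(per_video)
    scores = sorted(
        micro_f1([per_video[rng.randrange(n)] for _ in range(n)])
        for _ in range(iterations)
    )
    lo = scores[round(alpha / 2 * (iterations - 1))]
    hi = scores[round((1 - alpha / 2) * (iterations - 1))]
    return lo, hi


# Hypothetical per-video counts (not benchmark data).
per_video = [(2, 0, 0), (1, 1, 1), (0, 1, 0)]
point_estimate = micro_f1(per_video)
ci_low, ci_high = bootstrap_ci(per_video)
```

Resampling videos rather than individual segments keeps all segments from one video together, which respects the fact that errors within a video are correlated.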

Hard / Soft split: Metrics are reported separately for high-confidence (unanimous) and low-confidence (judge-arbitrated) segments, revealing how models perform on clear-cut versus ambiguous cases.

> Overall Ranking

The table below ranks models by F1 score with 95% bootstrap confidence intervals. Note that CI widths of ~12–16 pp mean differences between the top models are not statistically significant — treat relative rankings with caution.

| Model | F1 [95% CI] | F1 (hard) | Precision | Recall | Cost/Video | Latency (median) | MAE Start | MAE End | Mean IoU |
|---|---|---|---|---|---|---|---|---|---|
| gemini-3.1-flash-lite | 85.4% [78.6%–91.4%] | 85.9% | 87.8% | 83.2% | $0.0057 | 4.8s | 3.9s | 2.0s | 87.8% |
| gemini-3-flash | 85.1% [76.8%–92.2%] | 84.5% | 83.0% | 87.4% | $0.0077 | 2.9s | 2.9s | 2.0s | 89.1% |
| qwen-3.5-flash | 84.6% [77.6%–91.3%] | 85.1% | 88.5% | 81.0% | $0.0023 | 8.1s | 3.8s | 1.9s | 87.2% |
| gpt-5.4-nano | 82.4% [75.8%–89.2%] | 80.6% | 78.8% | 86.3% | $0.0036 | 6.4s | 5.1s | 1.5s | 85.7% |
| claude-haiku-4.5 | 81.1% [72.5%–89.0%] | 83.7% | 85.9% | 76.8% | $0.0178 | 2.6s | 4.0s | 2.3s | 87.3% |

> Detection Accuracy

F1 score captures the balance between precision and recall. Mean IoU shows how precisely predicted segment boundaries overlap with ground truth — a model can correctly detect a segment (high F1) while placing boundaries poorly (low IoU).

> F1 Score

Harmonic mean of precision and recall — the primary ranking metric.
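As a quick consistency check, the F1 column in the ranking table can be recomputed from the reported precision and recall using F1 = 2PR / (P + R). The values below are copied from that table; only the helper name `f1` is introduced here.

```python
def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


# (precision, recall) as fractions, taken from the Overall Ranking table.
reported = {
    "gemini-3.1-flash-lite": (0.878, 0.832),
    "gemini-3-flash": (0.830, 0.874),
    "qwen-3.5-flash": (0.885, 0.810),
    "gpt-5.4-nano": (0.788, 0.863),
    "claude-haiku-4.5": (0.859, 0.768),
}

recomputed = {
    model: round(f1(p, r) * 100, 1) for model, (p, r) in reported.items()
}
```

The recomputed values (85.4, 85.1, 84.6, 82.4, 81.1) agree with the table to one decimal place.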

> Precision vs Recall

Conservative models cluster top-left, aggressive ones bottom-right. Top-right is ideal.

The precision-recall scatter reveals distinct model strategies. Precision-biased models (upper-left) are conservative — they miss some sponsors but rarely make false claims. Recall-biased models (lower-right) catch more sponsors but produce more false positives.

For a skip-ahead UX like Cutio's, high recall is critical — missed sponsors degrade the user experience more than occasional false skips, which users can easily undo. But precision below ~80% creates an annoyingly trigger-happy experience. The ideal operating point is the top-right corner: high in both dimensions.

> The Hard/Soft Gap

The most striking finding: soft segments are nearly impossible for every model. These are segments where reference annotators disagreed and a judge had to arbitrate. If even frontier models can't agree on whether something is an ad, it's unsurprising that test models struggle too.

A structural factor amplifies this effect: because models don't distinguish "hard" from "soft" predictions, all unmatched predictions count as false positives against the small pool of soft GT segments (only 8 out of 95). This makes soft precision inherently near-zero — the metric captures genuine difficulty but also reflects the imbalance between tiers.

| Model | Hard F1 | Soft F1 | Gap |
|---|---|---|---|
| gemini-3.1-flash-lite | 85.9% | 6.1% | 79.8pp |
| qwen-3.5-flash | 85.1% | 6.3% | 78.7pp |
| gemini-3-flash | 84.5% | 7.4% | 77.1pp |
| claude-haiku-4.5 | 83.7% | 2.1% | 81.6pp |
| gpt-5.4-nano | 80.6% | 8.9% | 71.7pp |

Hard F1 ranges from 80.6% to 85.9%, while soft F1 never exceeds 8.9%. This suggests the benchmark's difficulty is bimodal: clear-cut segments are solved well, but ambiguous edge cases remain open. The soft tier effectively measures a model's ability to handle content that even the frontier reference models disagree on.

> Timing Accuracy

Beyond detection, where a model places segment boundaries matters. A correctly detected segment with sloppy boundaries creates a jarring skip experience. We measure MAE (Mean Absolute Error) for start and end timestamps separately.
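Boundary MAE over matched pairs can be computed along these lines. The function name and the sample pairs are illustrative and are not taken from the benchmark code.

```python
from typing import List, Optional, Tuple

Segment = Tuple[float, float]  # (start_seconds, end_seconds)


def boundary_mae(
    pairs: List[Tuple[Segment, Segment]]
) -> Optional[Tuple[float, float]]:
    """Return (MAE of start, MAE of end) over (predicted, ground_truth) pairs."""
    if not pairs:
        return None  # undefined when nothing was matched
    n = len(pairs)
    mae_start = sum(abs(pred[0] - gt[0]) for pred, gt in pairs) / n
    mae_end = sum(abs(pred[1] - gt[1]) for pred, gt in pairs) / n
    return mae_start, mae_end


# Hypothetical matched pairs (not benchmark data).
pairs = [((10, 40), (12, 42)), ((100, 130), (104, 130))]
result = boundary_mae(pairs)
```

Because only matched pairs contribute, MAE says nothing about missed or spurious segments; it should be read alongside F1 rather than instead of it.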

| Model | MAE Start | MAE End | Ratio (Start/End) |
|---|---|---|---|
| gemini-3-flash | 2.9s | 2.0s | 1.4× |
| qwen-3.5-flash | 3.8s | 1.9s | 1.9× |
| gemini-3.1-flash-lite | 3.9s | 2.0s | 1.9× |
| claude-haiku-4.5 | 4.0s | 2.3s | 1.8× |
| gpt-5.4-nano | 5.1s | 1.5s | 3.4× |

Start boundaries are harder than end boundaries for every model: start MAE is 1.4× to 3.4× the end MAE, and close to 2× for three of the five models. This makes intuitive sense: sponsor segments often begin with a natural transition ("speaking of which..."), while endings are marked by clearer signals ("anyway, back to..."). The prompt instructs models to start 2–3 seconds before the transition phrase and include music gaps, and this ambiguity makes start detection inherently noisier.

> Cost-Performance Frontier

For a production service processing thousands of videos daily, cost per video is a critical dimension. The spread across models is dramatic: a roughly 8× cost difference between the cheapest and most expensive model.

> Cost vs F1

Cost-performance Pareto frontier. Top-left corner is the sweet spot.

> Cost per Video

API cost for a single video analysis, sorted cheapest first.

> Latency

Response time in seconds. Solid = median, faded = p95.

The cost-efficiency sweet spot belongs to qwen-3.5-flash at 364 F1-points per dollar. The raw accuracy leader gemini-3.1-flash-lite costs about 2.5× as much per video for a 0.8 pp F1 improvement, a steep marginal cost that may or may not be justified depending on accuracy requirements.
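The cost figures can be reproduced approximately from the rounded values in the ranking table. The sketch assumes "F1-points per dollar" means F1 as a fraction divided by cost per video in dollars; that reading is an inference, and with the rounded table values it gives about 368 for qwen-3.5-flash rather than the stated 364, a gap that presumably comes from unrounded costs.

```python
# (F1 as a fraction, cost per video in USD), from the Overall Ranking table.
models = {
    "gemini-3.1-flash-lite": (0.854, 0.0057),
    "gemini-3-flash": (0.851, 0.0077),
    "qwen-3.5-flash": (0.846, 0.0023),
    "gpt-5.4-nano": (0.824, 0.0036),
    "claude-haiku-4.5": (0.811, 0.0178),
}

# Assumed definition: F1 fraction divided by dollars per video.
efficiency = {name: f1 / cost for name, (f1, cost) in models.items()}
most_efficient = max(efficiency, key=efficiency.get)

costs = [cost for _, cost in models.values()]
cost_spread = max(costs) / min(costs)  # most expensive vs cheapest

leader_vs_qwen_cost = (
    models["gemini-3.1-flash-lite"][1] / models["qwen-3.5-flash"][1]
)
leader_vs_qwen_f1_pp = (
    models["gemini-3.1-flash-lite"][0] - models["qwen-3.5-flash"][0]
) * 100
```

On these inputs the cost spread is about 7.7×, the accuracy leader costs about 2.5× as much as qwen-3.5-flash, and the F1 difference between the two is 0.8 percentage points.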

> Limitations

- Dataset size. 65 videos is enough to surface broad patterns but too small for fine-grained statistical claims. Bootstrap CI widths of ~12–16 pp reflect this: differences smaller than that may be noise.
- LLM-as-ground-truth. The gold GT itself is produced by AI models, not human annotators. While the multi-model consensus protocol with Krippendorff's α = 0.856 suggests high internal reliability, systematic biases shared by all reference models would be invisible.
- Single-pass evaluation. Each model runs once per video at temperature 0.3 with a uniform reasoning budget (1024 thinking tokens) and a 4096-token output cap. No ensembling or multi-pass aggregation is used.
- Pricing volatility. API costs are based on published rates at time of evaluation and may change.